Your AI Tools Have More Access Than You Think.

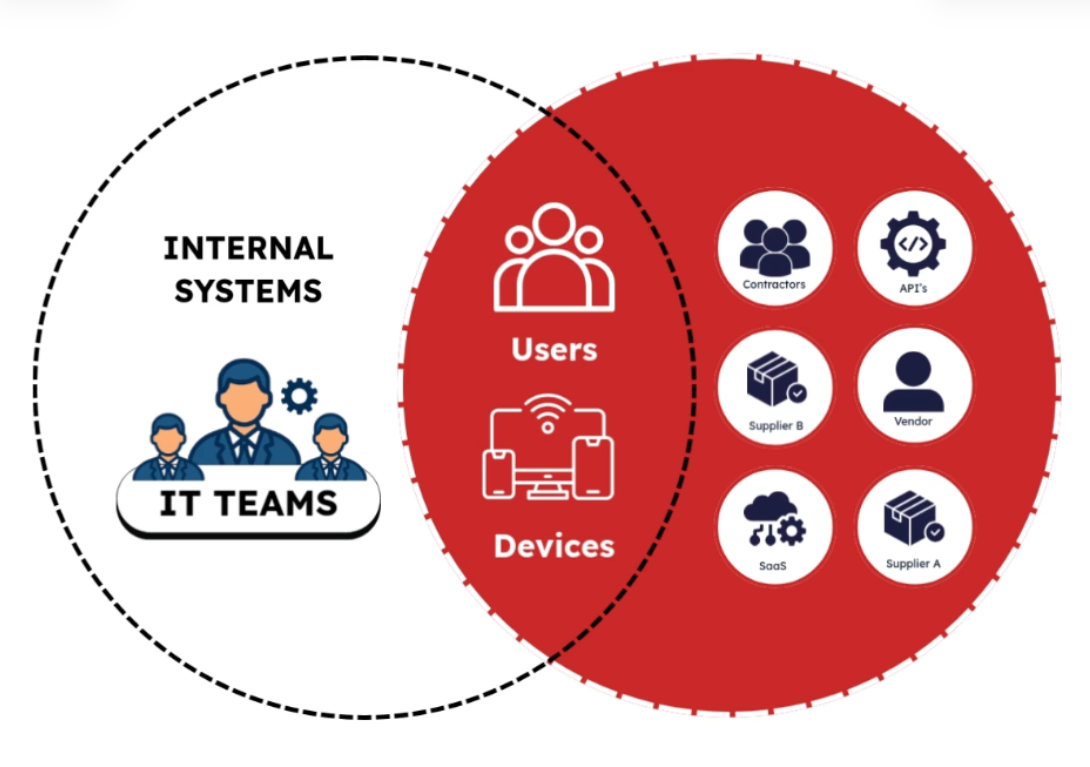

AI tools are spreading across organizations faster than most security teams can track. Each OAuth login or SaaS integration can quietly create persistent access to company data, often without centralized visibility.

AI adoption is accelerating across every team. So is something else: a quiet, growing network of external access to your most sensitive data, and most organizations can't see it clearly.

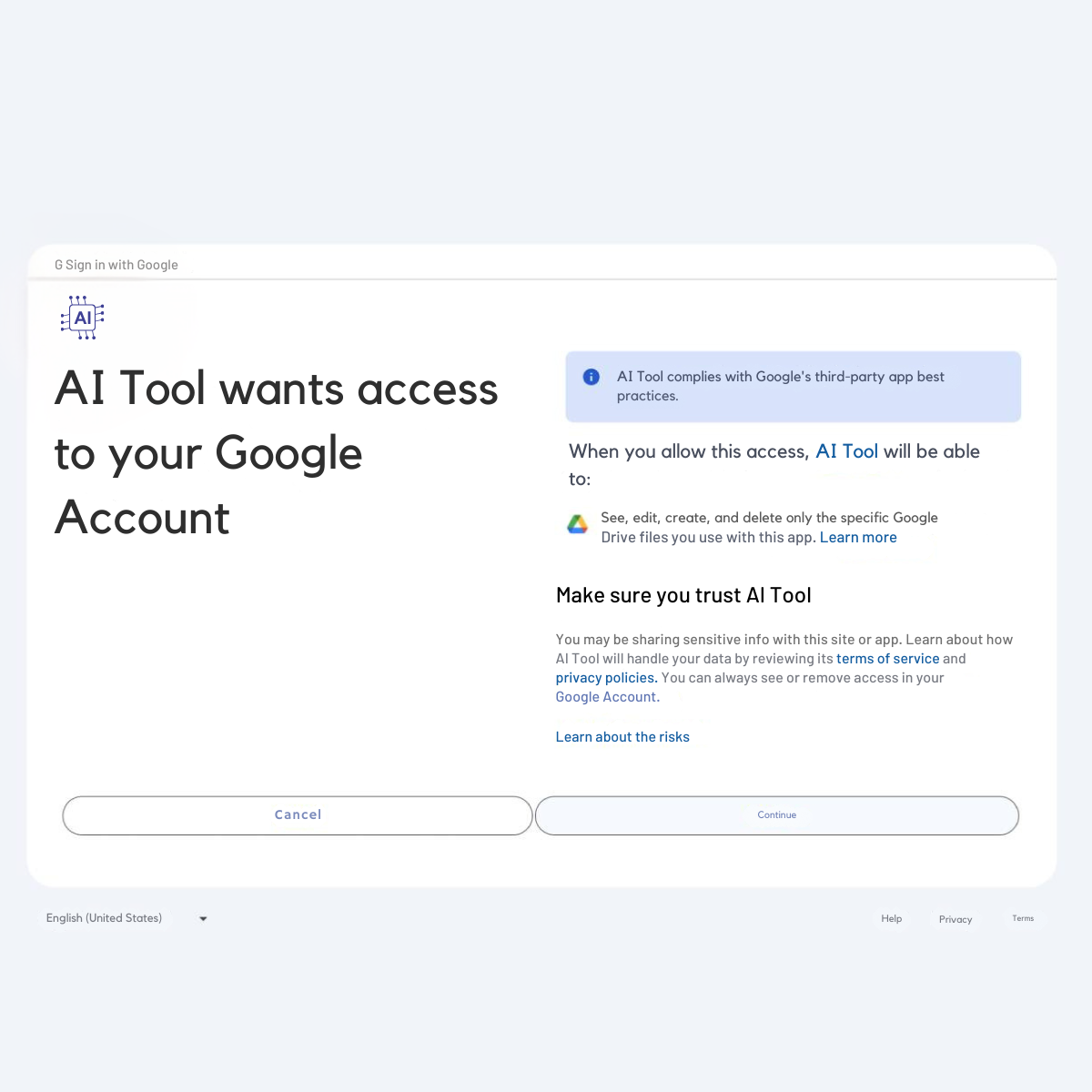

It starts with a button.

A sales rep connects an AI email assistant to the CRM. A marketing manager links a design tool to cloud storage. A developer hooks up an AI research assistant to internal documentation to save an afternoon of searching.

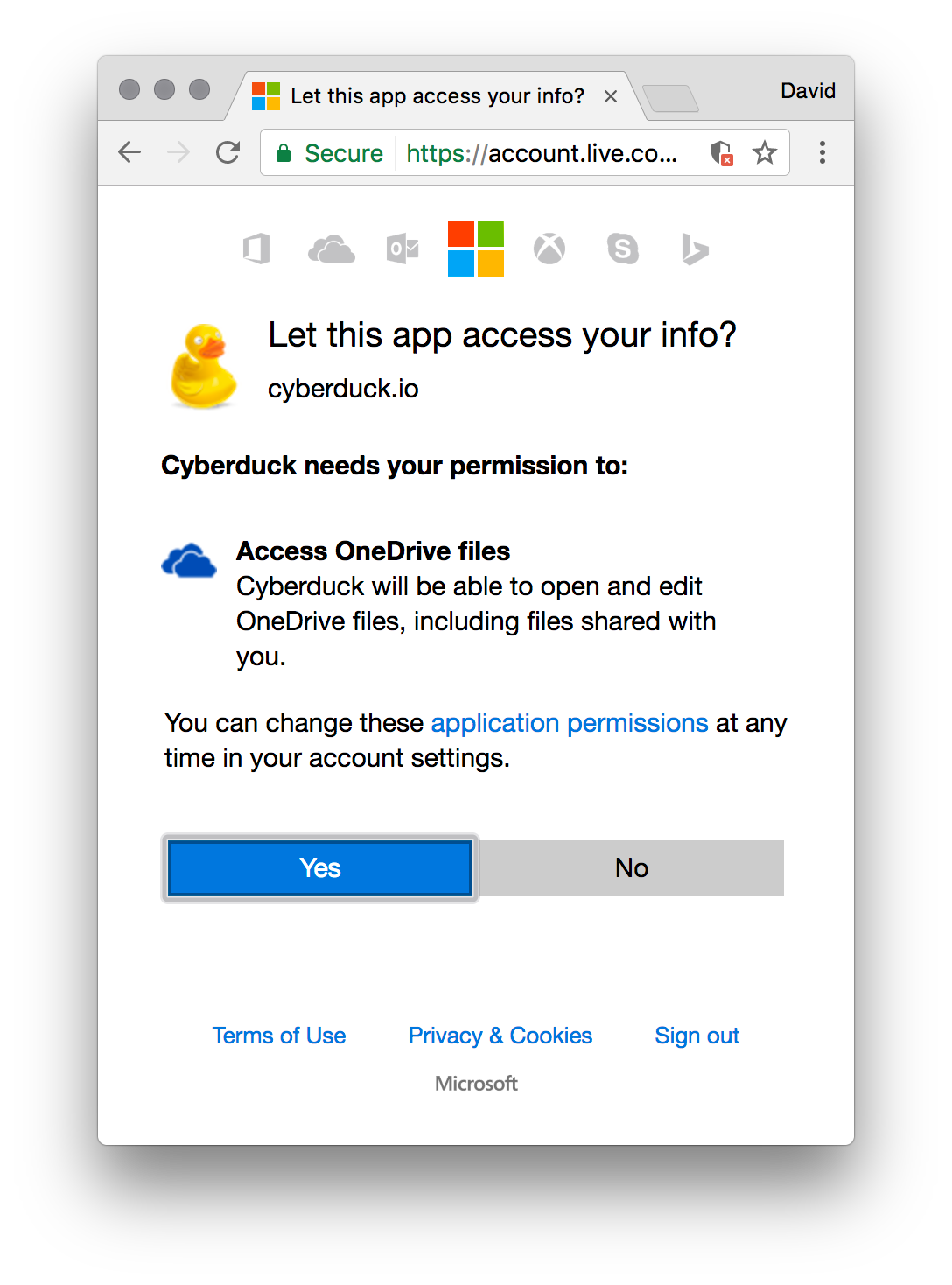

None of it feels unusual. Each action takes seconds. A quick OAuth prompt. A "Sign in with Google" or "Sign in with Microsoft" click. Done.

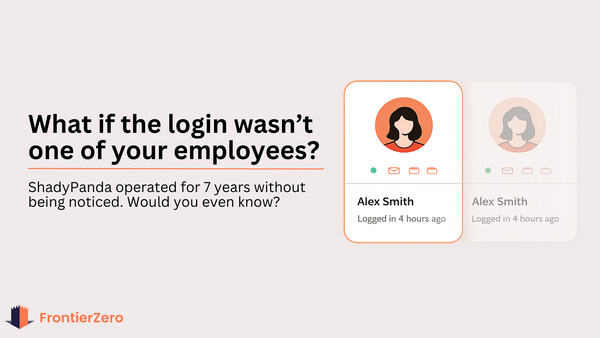

But here's what actually happened: a new external identity just entered your SaaS environment, often with persistent access that nobody formally approved.

Multiply that by every team in your organization. Now multiply it by twelve months of quiet experimentation.

This is AI sprawl. But it's not really an AI problem. It's an access problem.

Why security teams can't see it, and it's not their fault.

Unlike traditional software vendors, AI tools rarely go through procurement. They appear as integrations scattered across Google Workspace, Salesforce, Notion, Slack, GitHub, and internal wikis.

Each platform tracks its own integrations. Microsoft's security tooling gives you visibility into Microsoft workloads. Your CRM shows you what's connected to your CRM. But no single console shows you the full picture across all of them at once.

So when a security team tries to map their exposure, the challenge isn't analysis. It's that the data is fragmented across a dozen different admin consoles.
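To make the fragmentation concrete, here is a minimal sketch of what consolidation involves, assuming each admin console can export its connected apps as a list of records. The field names, app names, and sample rows below are hypothetical placeholders, not any vendor's real export format. Each console describes the same kind of access in its own shape, so the first step is normalizing everything into one record type.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    platform: str   # console the record came from
    app: str        # third-party tool holding the access
    user: str       # employee who authorized it
    scopes: tuple   # permissions granted


# Hypothetical exports: every console names its fields differently.
workspace_export = [
    {"app_name": "MailPilot AI", "authorized_by": "ana@example.com",
     "scopes": ["mail.read"]},
]
crm_export = [
    {"connected_app": "MailPilot AI", "owner": "ana@example.com",
     "permissions": "contacts.read offline_access"},
    {"connected_app": "DeckGenie", "owner": "raj@example.com",
     "permissions": "files.read"},
]


def from_workspace(rows):
    """Normalize the (assumed) workspace export shape."""
    return [Grant("workspace", r["app_name"], r["authorized_by"],
                  tuple(r["scopes"])) for r in rows]


def from_crm(rows):
    """Normalize the (assumed) CRM export shape; scopes arrive space-separated."""
    return [Grant("crm", r["connected_app"], r["owner"],
                  tuple(r["permissions"].split())) for r in rows]


def unified_inventory(*grant_lists):
    """Group grants by app so one tool's reach across platforms is visible."""
    inventory = {}
    for grants in grant_lists:
        for grant in grants:
            inventory.setdefault(grant.app, []).append(grant)
    return inventory


inventory = unified_inventory(from_workspace(workspace_export),
                              from_crm(crm_export))
for app, grants in sorted(inventory.items()):
    platforms = sorted({g.platform for g in grants})
    print(f"{app}: {len(grants)} grant(s) across {platforms}")
```

Grouping by app rather than by platform is the point of the exercise: a tool that looks minor in any single console can turn out to hold access in several.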

The result is something most leaders would find uncomfortable to say out loud:

"I know we're using AI tools. I'm not entirely sure which ones have access to what."

What this looks like in practice.

Consider a mid-size SaaS company — 300 employees, multiple active teams. Over 18 months, various teams had adopted AI tools independently. When they finally ran a cross-platform access audit, they found:

47 active OAuth tokens from AI tools — including 12 from tools no longer actively used.

3 AI assistants with read access to customer data records in the CRM.

An AI automation platform connected to 6 different SaaS apps, two of which had been deprecated.

No single person with visibility into all of it.

Nobody had done anything wrong. Each integration made sense at the time. But over time, they had created a sprawling network of access that nobody was managing, because nobody could see it in one place.
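The kinds of findings listed above can be sketched as two simple checks over a consolidated list of grants. This is an illustration under stated assumptions, not a definitive audit: the 90-day staleness threshold, the scope names treated as customer data, and the sample records are all hypothetical, and real platforms may not expose a last-used date at all.

```python
from datetime import date, timedelta

AUDIT_DATE = date(2025, 1, 15)      # fixed so the example is reproducible
STALE_AFTER = timedelta(days=90)    # assumed threshold, tune to your policy
# Hypothetical scope names that would indicate access to customer records.
CUSTOMER_DATA_SCOPES = {"crm.contacts.read", "crm.accounts.read"}

# Hypothetical consolidated grants, one record per app per platform.
grants = [
    {"app": "MailPilot AI", "platform": "crm",
     "scopes": {"crm.contacts.read"}, "last_used": date(2025, 1, 10)},
    {"app": "DeckGenie", "platform": "storage",
     "scopes": {"files.read"}, "last_used": date(2024, 6, 2)},
    {"app": "NoteSummarizer", "platform": "crm",
     "scopes": {"crm.accounts.read"}, "last_used": date(2024, 9, 30)},
]


def is_stale(grant, today=AUDIT_DATE):
    """True when the grant has not been used within the staleness window."""
    return today - grant["last_used"] > STALE_AFTER


def touches_customer_data(grant):
    """True when any granted scope is on the customer-data list."""
    return bool(grant["scopes"] & CUSTOMER_DATA_SCOPES)


stale = [g["app"] for g in grants if is_stale(g)]
customer = [g["app"] for g in grants if touches_customer_data(g)]

print("Unused for 90+ days:", stale)
print("Can read customer data:", customer)
```

A grant that lands on both lists, unused yet still able to read customer records, is usually the first candidate for revocation.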

Five questions worth asking your team.

You don't need a full security overhaul to start getting clarity. These questions will tell you a lot about where you stand:

→ Which AI tools are currently connected to our SaaS environment?

Not just the ones IT approved — all of them, including personal tools that employees connected themselves.

→ Do we have OAuth tokens from tools we no longer actively use?

Access doesn't expire when a trial ends. An OAuth grant typically stays valid until someone revokes it, so tokens from abandoned tools are common and easy to miss.

→ Which AI integrations have access to customer data?

CRM connections, email assistants, and data tools are the most common exposure points.

→ Can we see AI activity across Microsoft and non-Microsoft environments in one place?

If the answer is no, you have a visibility gap, not a policy gap.

→ When did we last review which third-party integrations are still active?

Most organizations don't have a regular cadence for this. Most also can't say what's changed in the last 90 days.

If you can't answer most of these confidently, you're not alone, and you're not in a uniquely bad position. But you do have a gap worth closing.
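The last question, what has changed recently, becomes much easier once you keep periodic snapshots of which integrations are connected. A minimal sketch, assuming each snapshot is a set of (platform, app) pairs captured about 90 days apart; the platform and app names are hypothetical.

```python
def diff_snapshots(previous, current):
    """Compare two sets of (platform, app) pairs taken at different times."""
    prev, curr = set(previous), set(current)
    return {
        "added": sorted(curr - prev),      # new since the last review
        "removed": sorted(prev - curr),    # disconnected or revoked
        "unchanged": sorted(prev & curr),  # still connected
    }


# Hypothetical snapshots taken roughly 90 days apart.
snapshot_90_days_ago = {
    ("workspace", "MailPilot AI"),
    ("crm", "MailPilot AI"),
    ("storage", "DeckGenie"),
}
snapshot_today = {
    ("workspace", "MailPilot AI"),
    ("crm", "MailPilot AI"),
    ("crm", "NoteSummarizer"),
}

changes = diff_snapshots(snapshot_90_days_ago, snapshot_today)
for kind, items in changes.items():
    print(f"{kind}: {items}")
```

Even a diff this simple gives a review cadence something to work from: each cycle starts with the "added" list instead of a blank page.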

The goal isn't to slow AI adoption.

Teams that block AI tools in the name of security don't win. The tools find their way in anyway, just with less visibility.

The organizations that manage this well aren't necessarily running tighter controls. They're running with better visibility. They know which identities, human and AI, have access to what, across their entire SaaS ecosystem.

Unified visibility isn't a luxury anymore. It's the baseline for understanding your actual risk posture.

Context-based security (understanding not just who has access, but how that access is being used) is one of the more practical frameworks for getting there. If you'd like to explore what that looks like in practice, we've written more about it here: https://learn.frontierzero.io/what-is-context-based-security/