How One AI Tool Brought Down Vercel's Security

An AI tool with OAuth access. A trusted session. No alerts. The Vercel breach shows how Shadow AI is becoming the easiest way in.

Last week, Vercel confirmed a breach.

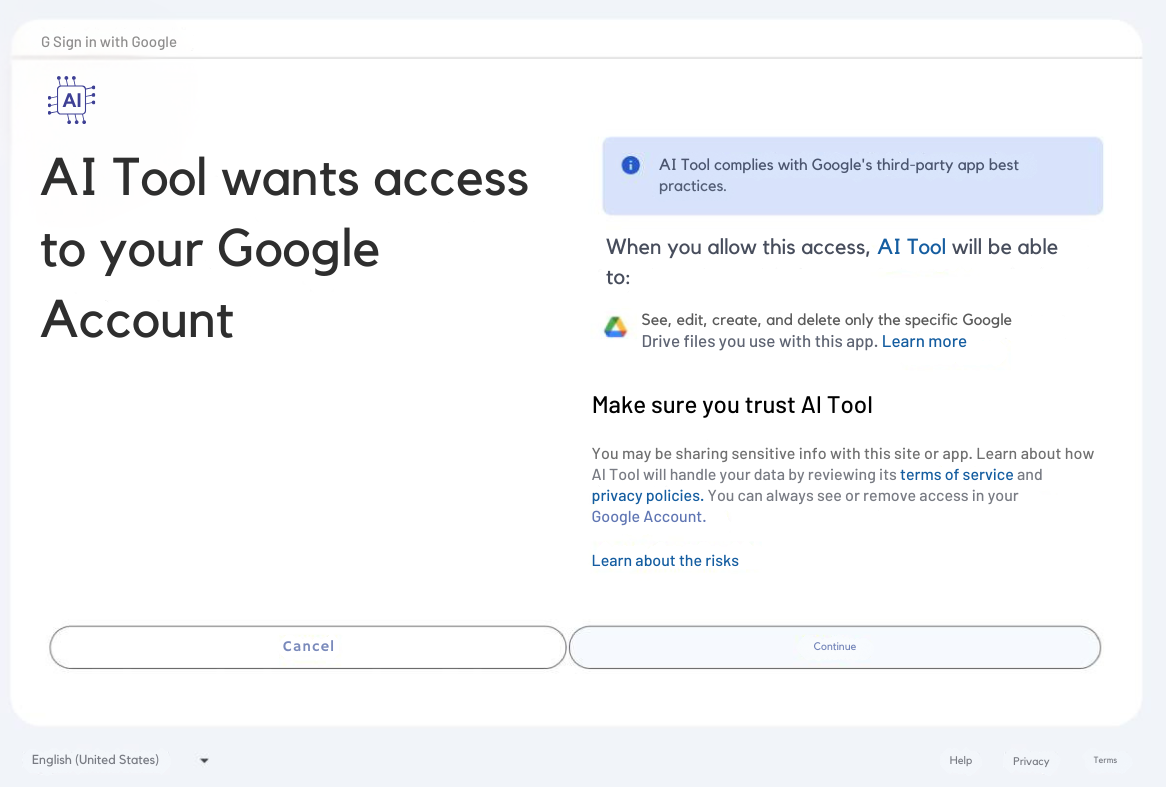

Not through a vulnerability in their own systems. Not through a phishing email that caught someone off guard. Through an AI tool that one of their employees was using. The tool was Context.ai, and it had OAuth access to Google Workspace.

Attackers compromised it. And walked straight in.

No alarm triggered. No perimeter crossed. Just a connected AI tool that nobody in security was watching, and a door that had been open for longer than anyone knew.

We've been talking about third-party risk for a while now. Vendor breaches, supply chain attacks, and external connections. But the Vercel breach signals something that deserves its own conversation.

Shadow AI is becoming the new entry point. And most security programmes aren't built to see it yet.

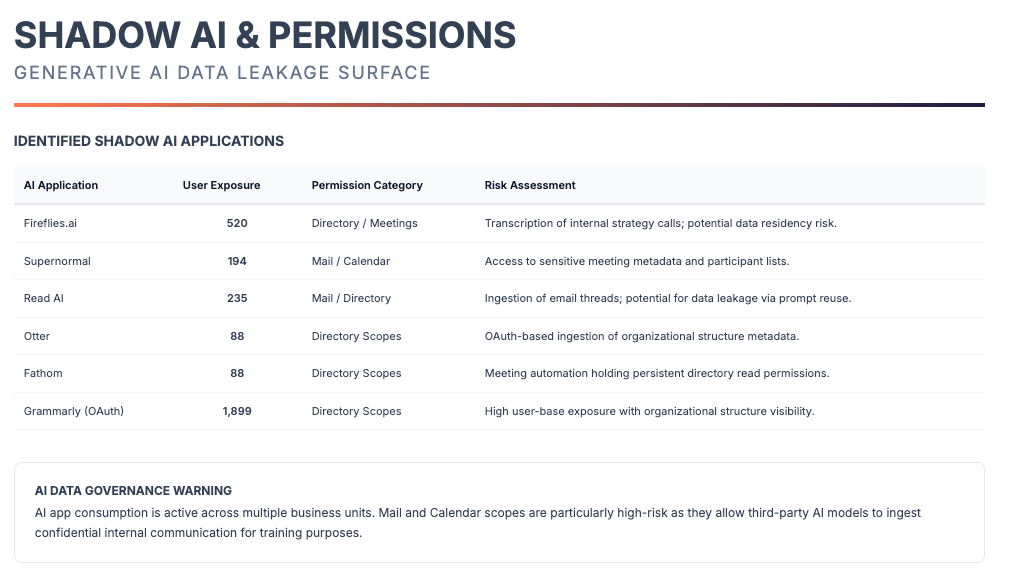

What Shadow AI actually looks like inside your organisation

Shadow AI isn't one thing. It doesn't announce itself. It doesn't show up in a procurement report or a security audit. It accumulates quietly, department by department, one OAuth approval at a time.

Here's what it actually looks like in practice.

Your marketing team is using an AI content platform connected to Google Drive to generate campaign copy. It has read access to shared drives that contain customer lists, contract templates, and internal pricing documents. Nobody in IT approved it. Nobody in security knows it exists. The marketer who connected it left the company three months ago. The integration is still active.

Your finance team connected an AI tool to automate invoice processing. It has access to your accounting software, your bank transaction data, and your payroll system. It was connected during a busy quarter when the team was under pressure to move faster. It solved a real problem. It was never reviewed.

Your sales team is using an AI assistant integrated with your CRM. It reads deal data, customer contacts, and communication history. Three different sales reps connected three different AI tools to the same CRM over the past six months. Each one has standing access. None of them has been audited. And that is before you count the dozen meeting note-takers with access to their calendars.

Your engineering team connected an AI coding assistant to GitHub. It has access to private repositories, internal tooling, and deployment scripts. The developer who set it up thought it was a personal tool. It isn't. It's connected to the company infrastructure with the company credentials.

None of these people did anything wrong. Every one of them made a reasonable decision to move faster and do their job better. But every one of those connections is now an identity inside your SaaS environment, with access to sensitive data, sitting outside the perimeter of your security programme, and completely invisible to your team.
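To make that concrete, here is a minimal sketch of how a team might surface one of these cases: an integration whose approving user has since left, as in the marketing example above. It assumes you can export OAuth grants and your active staff list into plain records. The `OAuthGrant` fields, the sample data, and the `find_orphaned_grants` helper are all hypothetical illustrations, not any vendor's API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class OAuthGrant:
    """One third-party app authorised by one user (hypothetical export format)."""
    app_name: str
    granted_by: str            # email of the user who approved the app
    granted_on: date
    scopes: Tuple[str, ...]    # short scope labels, for illustration only


def find_orphaned_grants(grants: Iterable[OAuthGrant],
                         active_users: Iterable[str]) -> List[OAuthGrant]:
    """Return grants whose approving user is not in the active staff list."""
    active = {u.lower() for u in active_users}
    return [g for g in grants if g.granted_by.lower() not in active]


# Illustrative data only: sam@ has left the company, lee@ is still active.
grants = [
    OAuthGrant("ai-copy-tool", "sam@example.com", date(2025, 6, 1), ("drive.readonly",)),
    OAuthGrant("ai-notes", "lee@example.com", date(2025, 9, 12), ("calendar.readonly",)),
]
active_users = ["lee@example.com"]

orphaned = find_orphaned_grants(grants, active_users)
for g in orphaned:
    print(f"{g.app_name}: approved by {g.granted_by} on {g.granted_on}, owner no longer active")
```

The check is deliberately simple. The hard part in practice is getting a complete, current export of grants in the first place, which is exactly the visibility gap described here.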

This is Shadow AI. And it is not a future risk. It is the current state of most organisations right now.

Why this is different from the Shadow IT problem you've seen before

Security teams have been dealing with Shadow IT for years. Employees using Dropbox instead of SharePoint. Personal Gmail accounts forwarding work emails. Unapproved apps on company devices.

Shadow AI is that problem at a different speed and a different scale.

Traditional Shadow IT required effort. An employee had to download software, set up an account, and move files manually. The friction created natural limits on how far it spread.

AI tools remove that friction entirely.

A new AI platform can be connected to Google Workspace in under a minute with a single OAuth click. No installation. No IT ticket. No visible footprint on any endpoint your security tools are monitoring. The employee doesn't think of it as a security decision. They think of it as a productivity decision. And they're right: it usually is. But the security implications are real regardless of the intent.

The volume is what makes this different. We are not talking about one or two employees connecting one or two tools. We are talking about dozens of tools, across every department, connected by people who have no reason to think twice about it, because the friction that used to create a natural checkpoint has been completely removed.

And almost every single one of those connections has OAuth access to something.

What happens when one of those tools gets compromised

This is where the Vercel story becomes a direct lesson rather than a distant headline.

Context.ai was not a rogue tool. It was not obviously dangerous. It was a legitimate AI analytics platform that a Vercel employee was using to do their job. The kind of tool that would not raise a flag even if someone had reviewed it, because on the surface, it looked fine.

But when attackers compromised Context.ai's OAuth application, they didn't need to breach Vercel directly. They had something better: a legitimate, trusted session inside a real employee's Google Workspace account. From there, they moved into Vercel's internal environments. They read environment variables from customer configurations. They accessed API keys, database credentials, signing keys, and authentication tokens.

The breach wasn't loud. It wasn't dramatic. It was quiet lateral movement through a trusted connection, the kind that most detection tools are not designed to catch, because the access looked completely normal.

Now think about the scenario we described above. The marketing tool with access to your Google Drive. The finance AI with access to your accounting software. The CRM integration your sales team connected six months ago.

If any one of those tools is compromised, not by you or your team but by an attacker who finds a vulnerability in the AI platform itself, the attacker inherits everything that tool can access. Your data. Your customers' data. Your credentials. All through a connection you didn't know existed.

You don't have to be breached directly. You just have to be connected to something that is.

We saw the same pattern with Rockstar — 78.6 million records taken through Anodot, a cloud monitoring vendor with standing Snowflake access. Zero alerts. With HackerOne — breached through a third-party benefits provider that had been sitting inside their environment unmonitored for weeks. With Crunchyroll — 6.8 million users exposed through a compromised vendor login.

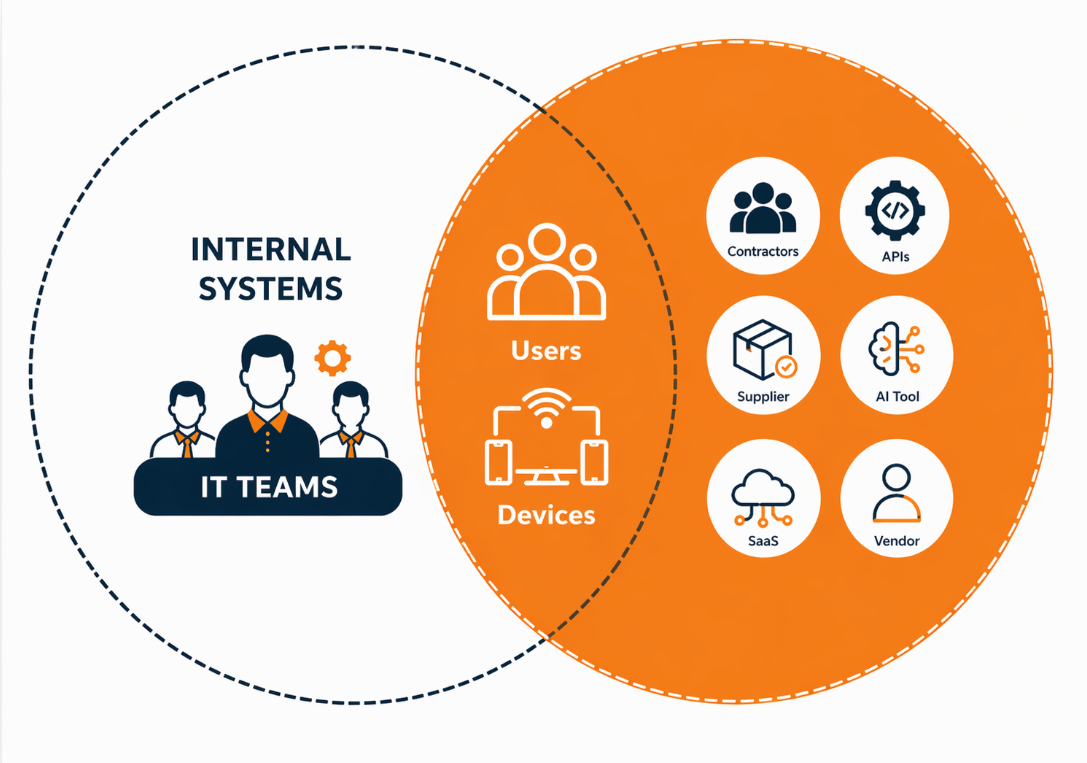

These were third-party breaches rather than AI breaches, but the underlying pattern is the same: IT teams have visibility over their own environments, but not over their connections.

The departments you're most exposed through right now

Not all Shadow AI carries equal risk. Some connections are low stakes. Others sit directly on top of your most sensitive data. Based on what we see across organisations, these are the areas that consistently carry the highest exposure.

Finance and accounting. AI tools connected to financial software, payroll systems, and banking integrations carry direct access to transaction data, employee compensation records, and payment credentials. A compromise here isn't just a data breach; it's a potential fraud event.

HR and people operations. AI tools used for recruitment, onboarding, and employee management often have access to personal data, employment contracts, salary information, and identity documents. Under GDPR and UAE PDPL, this data carries specific regulatory obligations. A breach here triggers mandatory notification requirements and significant fines.

Sales and CRM. AI assistants integrated with your CRM hold your entire customer relationship history: contact data, deal values, communication records, and contract terms. For an attacker, this data is valuable. For your customers, its exposure is a serious breach of trust.

Engineering and development. AI coding tools connected to GitHub or internal repositories can access source code, deployment scripts, API keys hardcoded in repositories, and infrastructure configuration. A compromise here can cascade into your production environment.

Executive and PA support. AI tools used by executive assistants often have broad access to calendars, email, document libraries, and communication threads. The access level is high. The security review is typically zero.

In each of these cases, the AI tool itself may be perfectly legitimate. The risk isn't the tool in isolation. It's the access it carries, the data it can reach, and the fact that if it is compromised, that access transfers to whoever compromised it.
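As a rough illustration of ranking connections by the access they carry rather than by the tool's reputation, the sketch below scores each app by the OAuth scopes it holds. The scope strings are standard Google API scopes. The weights, the app names, and the `exposure_score` and `rank_by_exposure` helpers are assumptions made up for this example, and you would tune them to your own data classification.

```python
from typing import Dict, Iterable, List

# Hypothetical sensitivity weights per scope; higher means more exposure.
SCOPE_WEIGHTS: Dict[str, int] = {
    "https://www.googleapis.com/auth/drive": 5,
    "https://www.googleapis.com/auth/drive.readonly": 4,
    "https://www.googleapis.com/auth/gmail.readonly": 4,
    "https://www.googleapis.com/auth/calendar.readonly": 2,
}
DEFAULT_WEIGHT = 1  # scopes missing from the table still count for something


def exposure_score(scopes: Iterable[str]) -> int:
    """Sum the weights of the distinct scopes an app has been granted."""
    return sum(SCOPE_WEIGHTS.get(s, DEFAULT_WEIGHT) for s in set(scopes))


def rank_by_exposure(app_scopes: Dict[str, List[str]]) -> List[str]:
    """Return app names ordered from highest to lowest exposure score."""
    return sorted(app_scopes, key=lambda app: exposure_score(app_scopes[app]), reverse=True)


# Illustrative inventory only.
apps = {
    "ai-notes": ["https://www.googleapis.com/auth/calendar.readonly"],
    "ai-copy-tool": [
        "https://www.googleapis.com/auth/drive.readonly",
        "https://www.googleapis.com/auth/gmail.readonly",
    ],
    "ai-exec-assistant": [
        "https://www.googleapis.com/auth/drive",
        "https://www.googleapis.com/auth/gmail.readonly",
        "https://www.googleapis.com/auth/calendar.readonly",
    ],
}

for name in rank_by_exposure(apps):
    print(name, exposure_score(apps[name]))
```

A score like this says nothing about whether a tool is trustworthy. It only orders the review queue so that the connections reaching the most sensitive data get looked at first.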

What this means for your security posture in 2026

The perimeter model of security assumed that the boundary of your organisation was knowable and defensible. Protect what's inside. Monitor what crosses the line.

That model is not compatible with Shadow AI.

When any employee in any department can create a new integration in sixty seconds — one that has persistent OAuth access to your SaaS environment, that leaves no footprint on your endpoints, that is invisible to your existing monitoring tools — the perimeter is no longer the boundary. The boundary is every connection that has ever been approved with a single click.

This doesn't mean your existing security investments are worthless. It means there is a layer they were not designed to cover. And that layer is growing faster than any other part of your attack surface right now.

As we covered in Your AI Tools Have More Access Than You Think, the access problem is often invisible until something goes wrong. The organisations that came out worst from the breaches we've tracked this year were not the ones with the weakest security; they were the ones with the biggest blind spots.

The question for every security leader right now is not whether Shadow AI exists in their environment. It does. The question is whether they can see it.

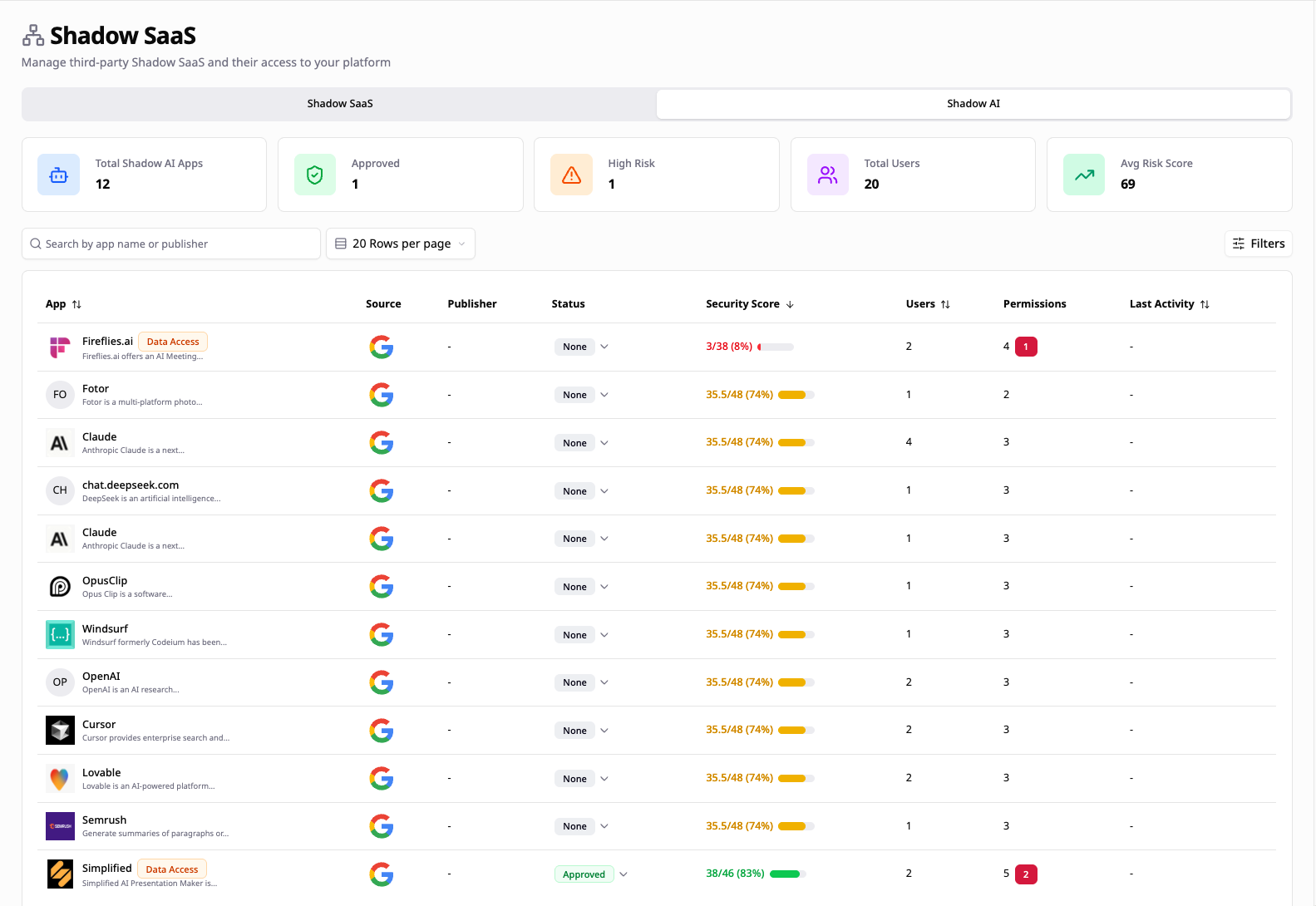

How FrontierZero sees what everything else misses

Most security tools monitor what they already know about. FrontierZero maps what nobody told it to look for.

When an employee connects an AI tool to your Google Workspace or Microsoft 365, approved or not, we see it. Who added it. When. What access it was granted. Not in a monthly report. In real time.

But that's just the starting point.

Every connected tool has a normal. It runs at certain times. It accesses certain data. It behaves within a predictable range. FrontierZero learns that baseline, using our Pattern of Life framework, and surfaces the moment something changes.

The tool that quietly read configuration files for three months suddenly starts pulling records at volume. The user who connected it logs in from a country they've never logged in from before, on a new device and with no VPN. An integration that had read-only access yesterday now appears to be requesting more.

None of that triggers a traditional alert. Against a known baseline, it's a signal.
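The general idea of comparing activity against a learned baseline can be sketched in a few lines. This is not FrontierZero's Pattern of Life implementation, which is not described here. It is a generic z-score check, and the `is_anomalous` helper, the threshold of 3.0, and the sample numbers are all assumptions for illustration.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(history: Sequence[float], today: float, threshold: float = 3.0) -> bool:
    """Flag `today` if it is more than `threshold` sample standard
    deviations above the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly flat history: any increase is a deviation.
        return today > mu
    return (today - mu) / sigma > threshold


# Hypothetical daily record reads by one connected tool over a week.
daily_reads = [40, 42, 38, 41, 39, 43, 40]

print(is_anomalous(daily_reads, 44))    # a slightly busy day
print(is_anomalous(daily_reads, 900))   # a sudden bulk pull
```

A real baseline would cover more than volume, for example time of day, source location, and which resources are touched, but the principle is the same: the signal only exists relative to what normal looked like before.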

That's Pattern of Life monitoring. A single pane of glass across your entire SaaS environment. Every AI tool, every OAuth connection, every identity with standing access is monitored, with continuous behavioural context underneath it all.

The Vercel breach moved quietly through a trusted connection that looked completely normal. The only way to catch something like that is to know what normal looks like first.

Closing thoughts

Vercel will recover. They have the resources, the team, and the public profile to manage the fallout. Most organisations dealing with a breach of this kind do not have that runway.

The difference between an organisation that catches this early and one that finds out from a BreachForums post is not budget. It is not team size. It is visibility.

Knowing what AI tools are connected to your environment. What data they can access. Whether any of those connections have already been flagged in threat intelligence feeds. Whether anything is behaving outside its expected pattern.

That picture, when it’s clear, current, and complete, is what makes the difference.

Most organisations don't have it yet. Not because they aren't trying. Because the tooling to get there hasn't been part of the standard security stack until now.

Get your free Shadow AI Report and see every approved and unapproved AI and SaaS connection in your environment, before someone else finds them first.